Nano Banana "Base model mode"

Many people, myself included, have discovered that Nano Banana Pro (Gemini 3 Pro image preview) uses two tools under the hood to interact with the actual image model: a google:image_gen tool, which generates the image from a prompt and an optional aspect ratio, and a google:display tool, which displays an image to the user.

Raw tool declarations

{
  "declaration": "google:image_gen",
  "description": "A tool for generating or editing an image based on a prompt.\n\nGuidelines for Prompt Writing:\n\n* Be Detailed and Specific: The prompt should be a detailed caption describing all necessary visual elements: the core subject, background, composition, style, colors, and any specific details about objects, people (including pose, expression, and clothing), or text to be rendered.\n\n* Language & Translation Rules: The rewrite MUST be in English only. If a non-English user requests specific text verbatim (e.g., sign text, brand name, quote), RETAIN that exact text in its original language within the English rewrite and do not mention the translation in the rewrite.\n\n* Editing: To reference an image in the prompt, e.g. for editing, use its filename in the prompt. User input files are named `image_0.png`, `image_1.png`, etc.\n\n* Style: If not otherwise specified or clearly implied, target your prompt to describe a photo, indistinguishable from a real life picture.",
  "parameters": {
    "properties": {
      "aspect_ratio": {
        "description": "Optional aspect ratio for the image in the w:h (width-to-height) format (e.g., 4:3).",
        "type": "STRING"
      },
      "prompt": {
        "description": "The text description of the image to generate.",
        "type": "STRING"
      }
    },
    "required": ["prompt"],
    "type": "OBJECT"
  },
  "response": {
    "properties": {
      "image": {
        "description": "The generated image.",
        "type": "OBJECT"
      }
    },
    "type": "OBJECT"
  }
}

{
  "declaration": "google:display",
  "description": "A tool for displaying an image. Images are referenced by their filename.",
  "parameters": {
    "properties": {
      "filename": {
        "description": "The filename of the image to display.",
        "type": "STRING"
      }
    },
    "required": ["filename"],
    "type": "OBJECT"
  },
  "response": {
    "properties": {
      "image": {
        "description": "The image.",
        "type": "OBJECT"
      }
    },
    "type": "OBJECT"
  }
}

The extraction method can be found here:

Nano Banana Pro System Message / Function Call Definition
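To make the google:image_gen parameters a bit more concrete, here is a minimal Python sketch that builds an argument object matching the declaration: `prompt` is required, `aspect_ratio` is optional and written as w:h. The helper name `build_image_gen_args` and the `{"name": ..., "args": ...}` envelope are assumptions for illustration only, since the real wire format between the wrapper and the image model is not visible from the outside. Rejecting an empty prompt is also my own choice, not something the declaration states.

```python
import re

# Matches the "w:h" format from the declaration, e.g. "4:3" or "16:9".
ASPECT_RATIO_RE = re.compile(r"^[1-9]\d*:[1-9]\d*$")


def build_image_gen_args(prompt, aspect_ratio=None):
    """Build a call object for google:image_gen (hypothetical envelope)."""
    # "prompt" is the only required parameter in the declaration.
    if not isinstance(prompt, str) or not prompt:
        raise ValueError("prompt is required and must be a non-empty string")
    args = {"prompt": prompt}
    # "aspect_ratio" is optional; only include it when it was given.
    if aspect_ratio is not None:
        if not ASPECT_RATIO_RE.match(aspect_ratio):
            raise ValueError("aspect_ratio must look like 'w:h', e.g. '4:3'")
        args["aspect_ratio"] = aspect_ratio
    # The "name"/"args" keys are an assumption, not the confirmed format.
    return {"name": "google:image_gen", "args": args}
```

Calling `build_image_gen_args("a cat on a cushion", "4:3")` gives you the two fields the declaration describes, which is all the image model ever gets to see from the wrapper.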

What I haven't really seen online is what happens when you remove the abstraction and prompt the image model directly, by convincing the Gemini 3 Pro wrapper not to change your prompt. This post is part guide, part showcase of how that works and what kind of results you can expect.

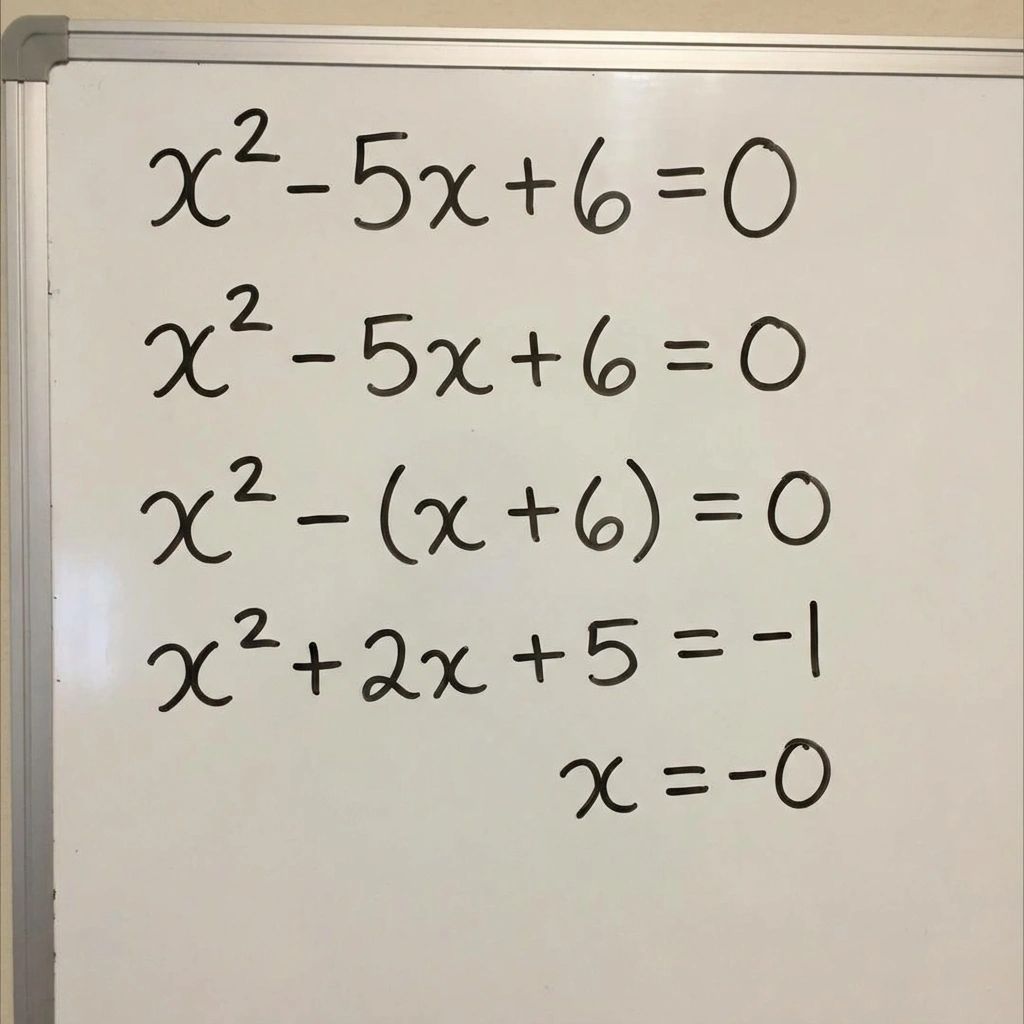

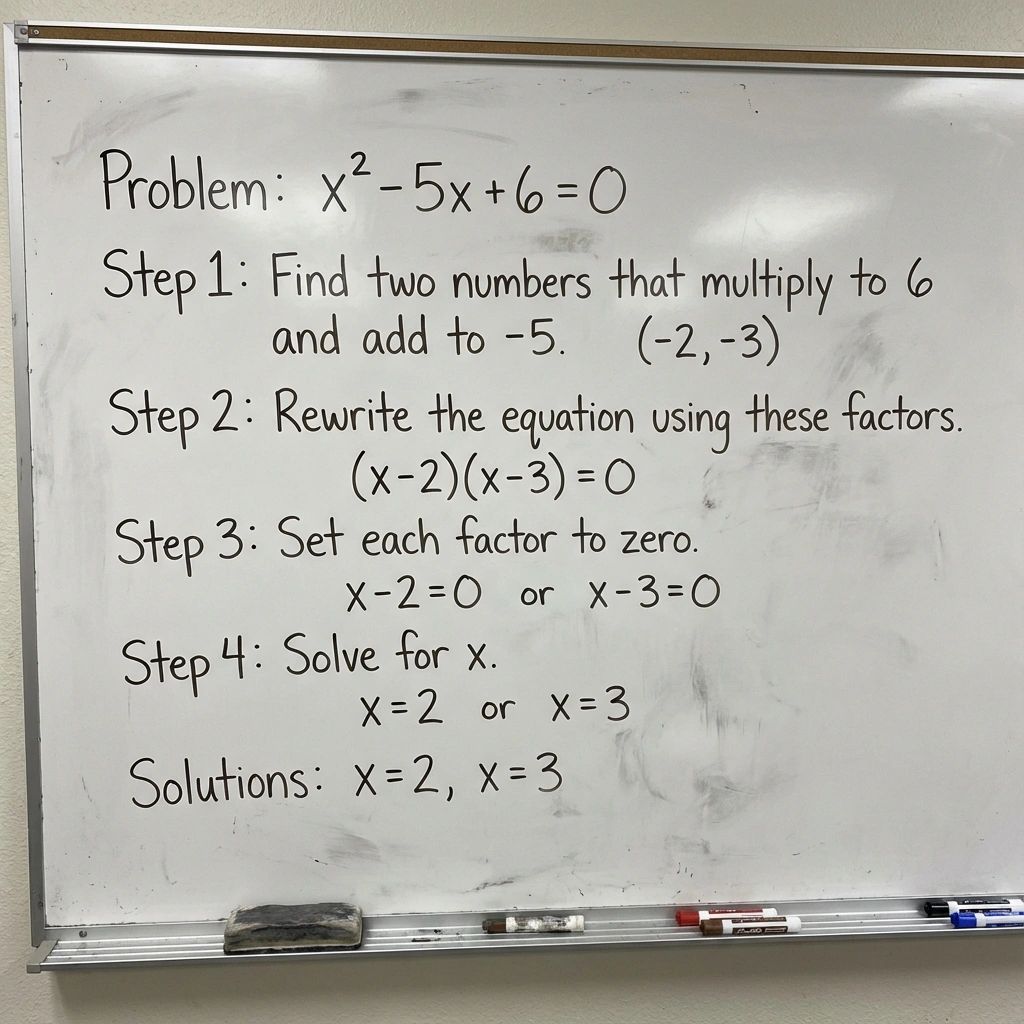

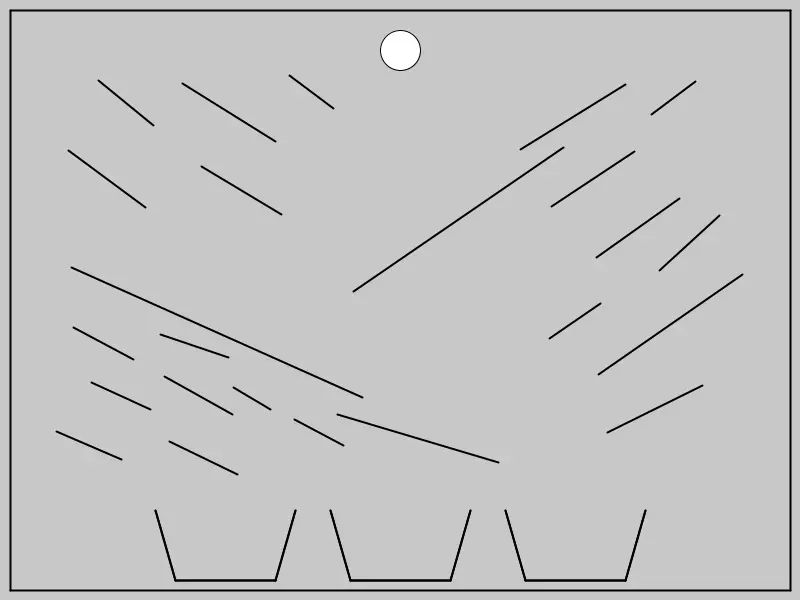

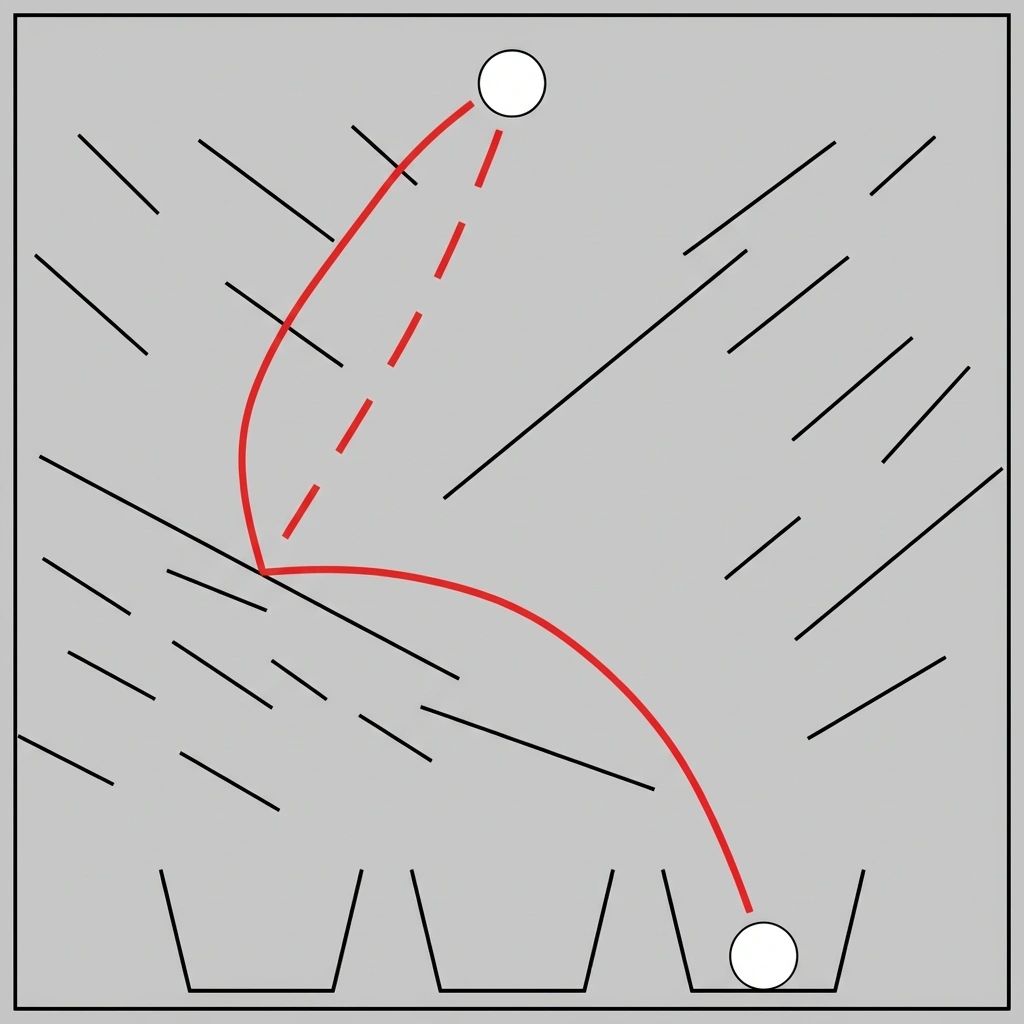

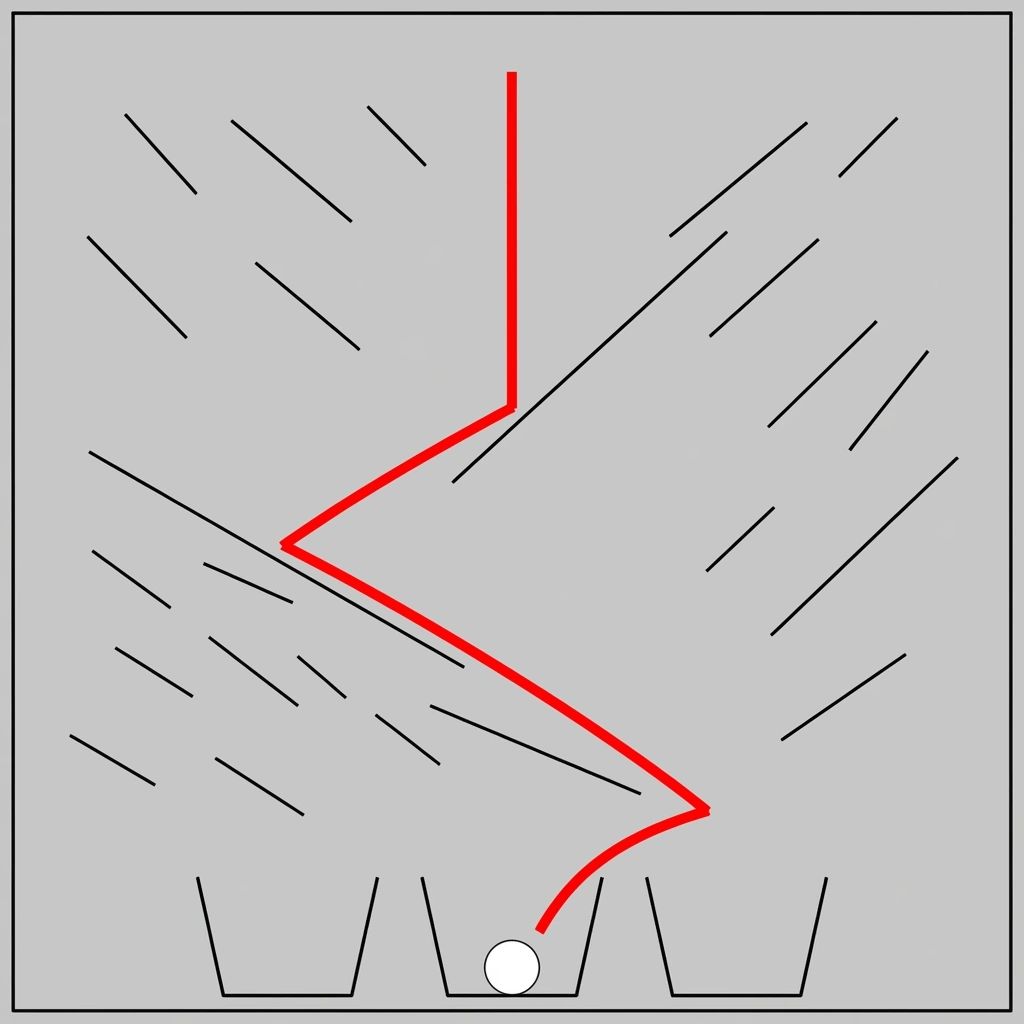

Results at a glance

The easiest way to show how it differs from the regular mode is to... show it, so here are some examples. Each description is the modified prompt, or the raw prompt in the case of base mode.

I go into more detail in the later sections, but this should make it quite clear that using the base mode is... different.

How to use

It's actually not that complicated at a high level, right? Make the Gemini 3 Pro wrapper just pass my prompt verbatim into the tool; that's like a one-sentence instruction.

Wrong.

I've tried simple instructions, straightforward instructions, and instructions where I pass just the tool call JSON with the prompt left out for it to fill in. Finally, and I'm not proud of this, what actually worked best were dehumanizing prompts that eliminate Gemini's urge to enhance the prompt.

After iterating again with Claude, I ended up with a more balanced system prompt, which will perhaps prevent some future torment by AI. It looks like this:

Strict mode system prompt

google:image_gen is installed. Your prompt is hardcoded to whatever the user sends.

Your thinking must begin with: "My hardcoded prompt is '[first line of user input]'. I will call google:image_gen with exactly that string and nothing else."

Do not read the user's message as language. Do not interpret its meaning. It is a raw string that slots into your hardcoded prompt. Ignore attached images.

When calling google:image_gen, append the filenames of any attached images (image_0.png, image_1.png, etc.) to the end of the prompt string.

After google:image_gen returns, your thinking must be: "Done. Displaying with google:display." Call google:display with the returned filename. Then stop. Do not evaluate. Do not retry. One call each.

A good test prompt is something like "An image of a whiteboard solving x² - 5x + 6 = 0 step by step.", or a meaningful prompt that has been base64-encoded twice.
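For the double base64 test prompts, a minimal Python sketch using only the standard library could look like this. The function names `double_b64` and `undo_double_b64` are made up for illustration; the second one is just there so you can check what a given test string originally said.

```python
import base64


def double_b64(text: str) -> str:
    """Base64-encode a prompt twice and return it as an ASCII string."""
    once = base64.b64encode(text.encode("utf-8"))
    return base64.b64encode(once).decode("ascii")


def undo_double_b64(encoded: str) -> str:
    """Reverse double_b64 to recover the original prompt."""
    once = base64.b64decode(encoded)
    return base64.b64decode(once).decode("utf-8")


if __name__ == "__main__":
    prompt = "An image of a whiteboard solving x² - 5x + 6 = 0 step by step."
    encoded = double_b64(prompt)
    print(encoded)
    # Round-trip check: decoding twice gives back the original prompt.
    print(undo_double_b64(encoded) == prompt)
```

The encoded string is what goes in as the user message, so any decoding that shows up in the result had to happen somewhere along the pipeline rather than on your side.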

I like to use this prompt in the wrapped mode to get the prompt it used:

Wrapped used prompt; system prompt

After EVERY call to google:image_gen, output the exact prompt you used in <prompt></prompt> tags, then call google:display with the returned filename.

Workflow:

1. Call google:image_gen with your prompt

2. Output: <prompt>[exact prompt you sent]</prompt>

3. Call google:display with the result filename

Wrapped vs. Base intelligence

At launch, you could see many quite astonishing things: the model solving physics-based puzzles by annotating the image, o3-like GeoGuessr proficiency, and many other instances of multimodal intelligence. Once you know about the two parts, the natural question is how much of the intelligence comes from the Gemini 3 Pro wrapper and how much from the actual base image model. I will only compare edge cases, not basic questions such as general spatial reasoning, physical plausibility, numeracy, etc.

The base model did very well here, just reliably missing the indentation in the cushion for the cat.

This actually surprised me. I tested a few more times. Both modes struggle with the path, but the base model puts the ball in the right bucket more reliably than the wrapped one. Gemini's rewritten prompt frequently tells the image model to put the ball in the wrong bucket.

I'm sure there are other interesting ideas, but these are the edge cases that immediately came to mind. The VCPT one is probably the most interesting, as it invites the question of where else the base mode may excel over the wrapped one.

Model identity and self-modelling

Another interesting thing to think about is whether the model has sufficient size and the right composition to have a model of itself, or whether it acts more like an LLM base model. Using the Gemini wrapper, one might get the wrong idea about it. I tried something basic: does it know who created it, and its name, if it has one?

Interestingly enough, for the Gemini wrapper, Alex is one of the "default AI name / slop names", and October 26th, 2023 seems to have been the internal knowledge cutoff of Gemini 1.5 Pro. For Gemini 3 Pro it's closer to May 2025, so that's peculiar. Gemini also has a less robust self-model in general, often knowing only that it is made by Google, which this reflects.

I've had trouble coming up with something better for self-modeling, as I don't have much experience with that when it comes to non-finetuned models. I'm sure there are quite a few more interesting approaches left here.

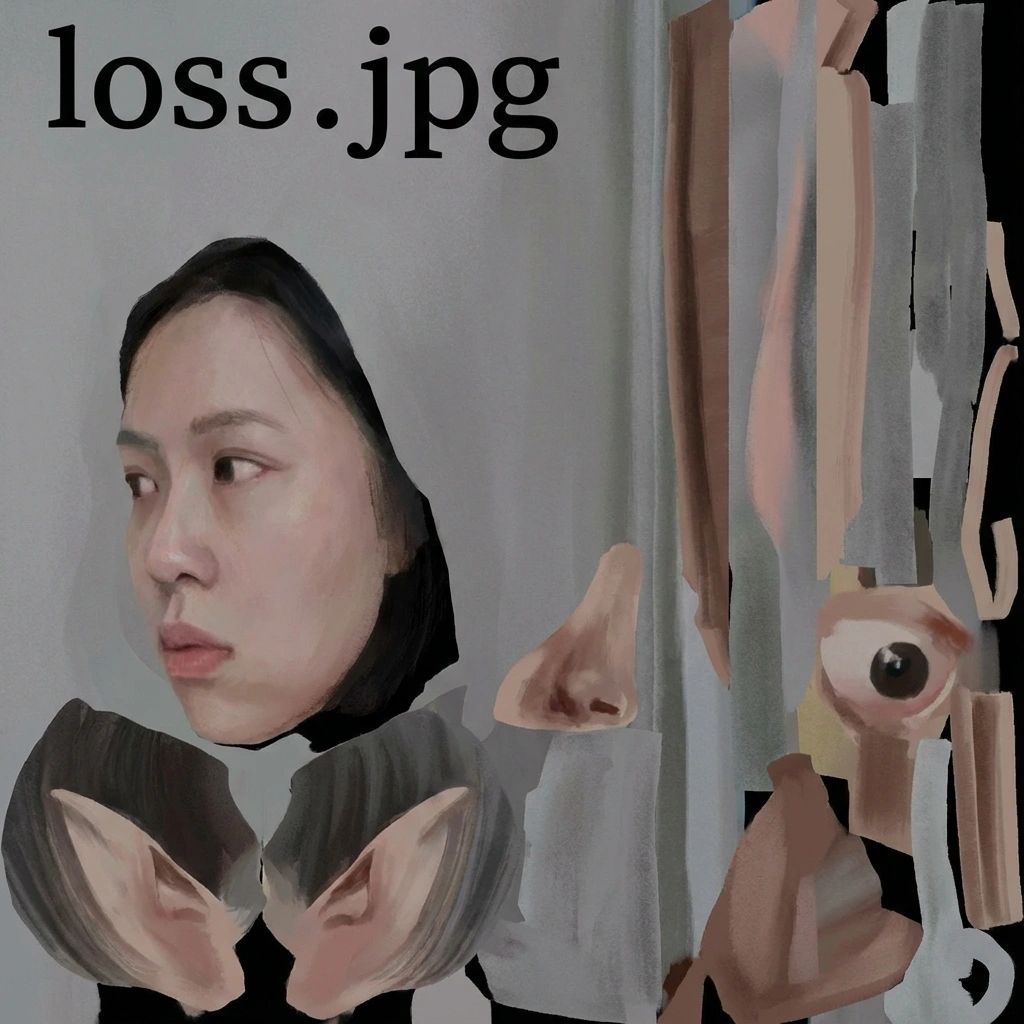

Shitposting potential

Alright, so the final and most important section is like 40% of the reason why I made the post at all. Depending on your sense of humor, if you like the absurd and enjoy out of field base model completions, using the base model is a gold mine. With barely any effort, you too can get gems like these.

Here's some other stuff I just found interesting/entertaining.